Abstract Interictal epileptiform discharge (IED) and its spatial

distribution are crucial for the diagnosis, classification and

treatment of epilepsy. Manual annotation by electroencephalography (EEG) experts led to a lack

of publicly available

datasets from multiple epilepsy centers, impeding the development of automatic IED detection. We

present

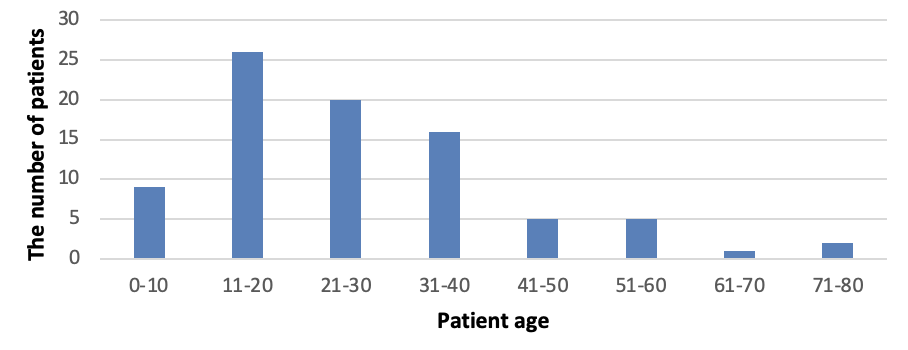

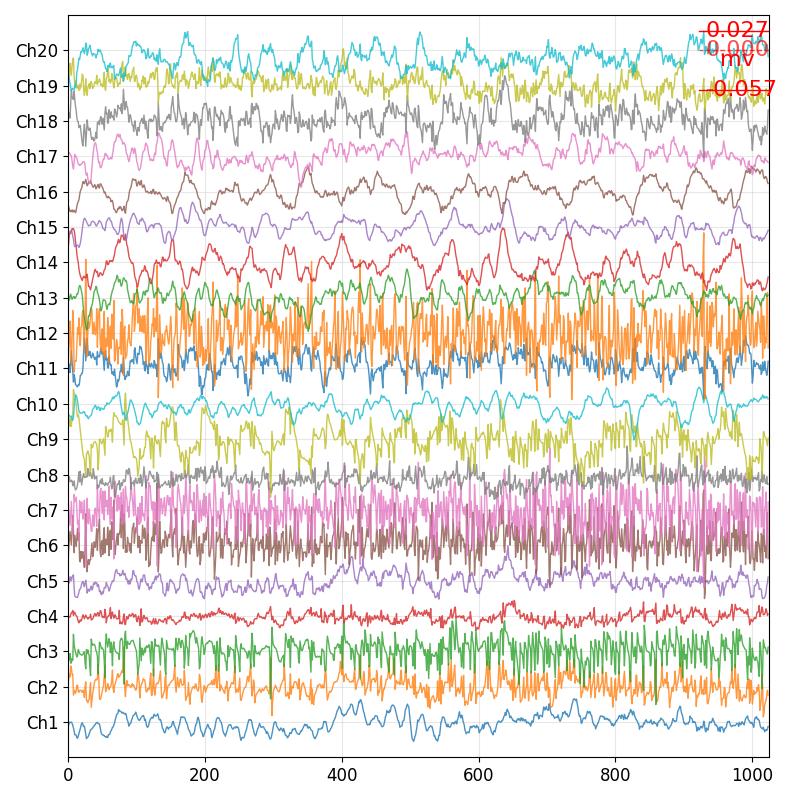

an EEG database containing annotated interictal epileptic EEG data from 84 patients. We

extracted 20-minute continuous

raw EEG data from each patient, amassing 28 hours in total. IEDs and the state of consciousness

(wake/sleep) were

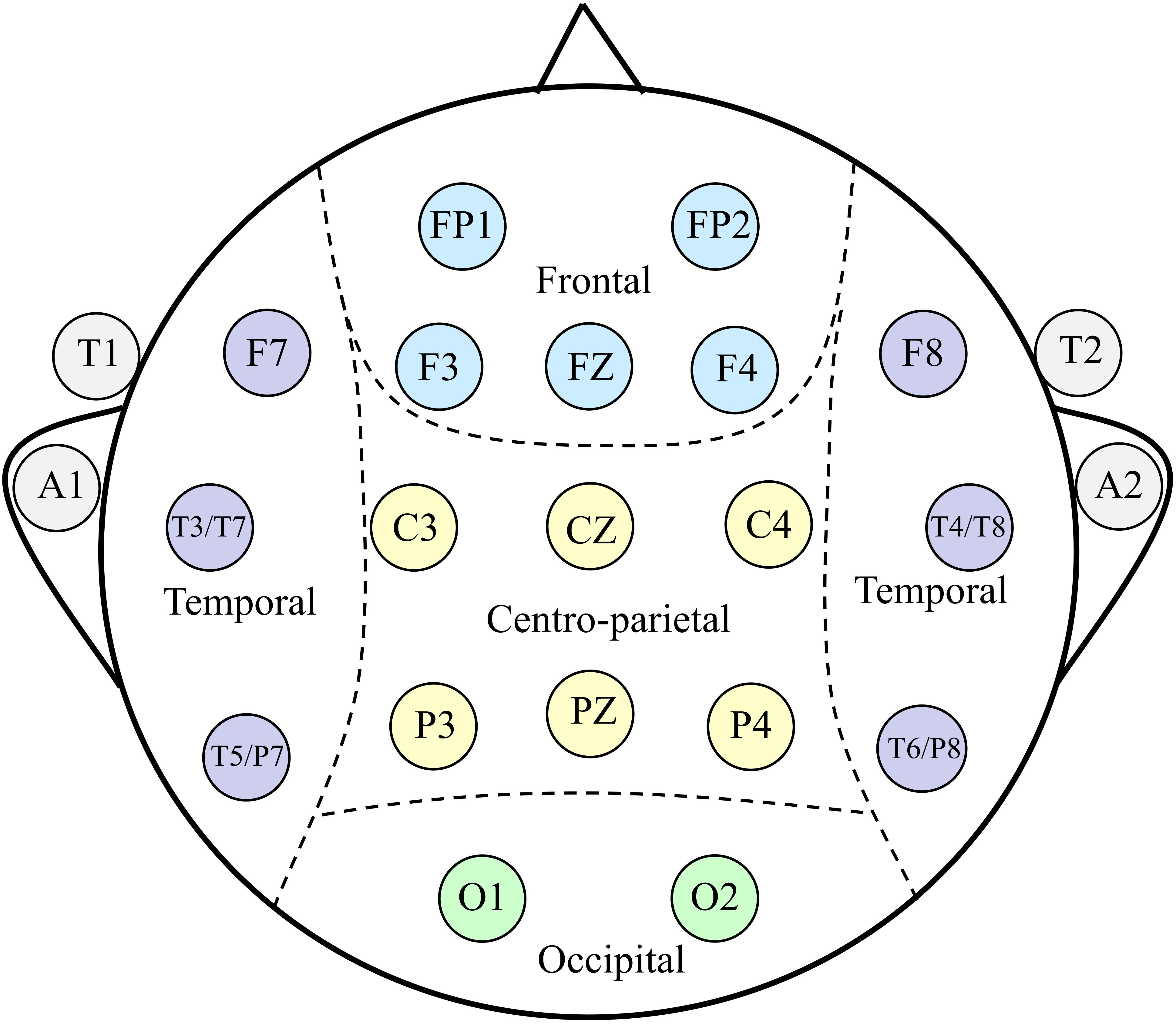

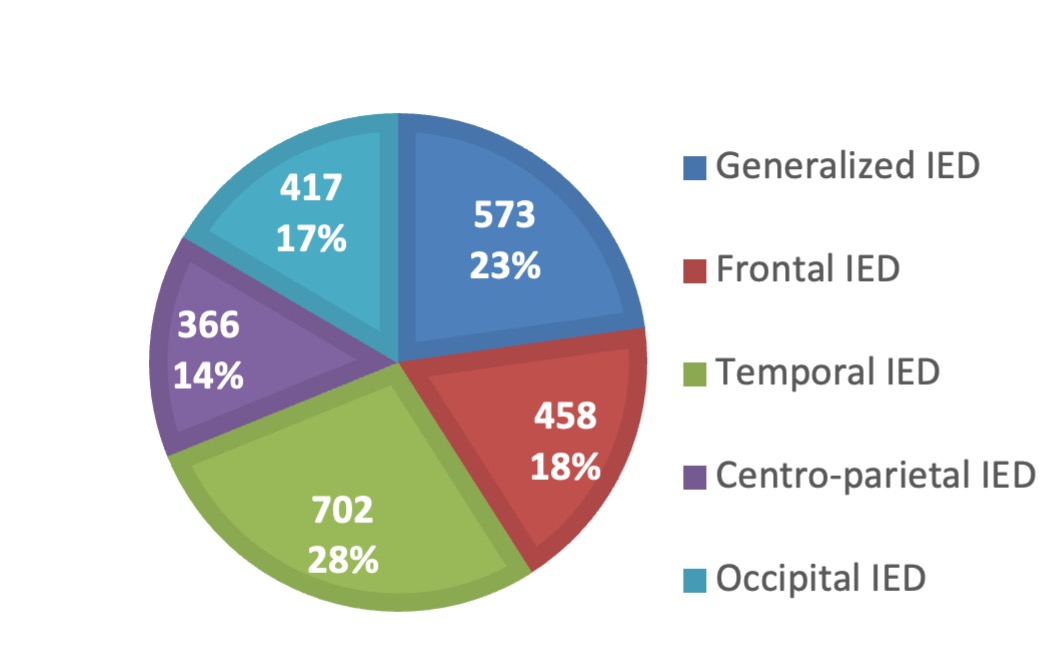

meticulously annotated by at least 3 EEG experts. Based on the occurrence regions, the

discharges are categorized into

five types: generalized IED, frontal IED, temporal IED, occipital IED, and centro-parietal IED.
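To make the five-way spatial categorization concrete, the sketch below assigns a discharge to a category from the set of electrodes on which it appears. It is a hypothetical illustration, not the annotators' criteria: the electrode-to-region map (older 10-20 names such as T3/T5), the placement of F7/F8 in the frontal group, the `generalized_min_regions` threshold, and the function name `classify_ied` are all assumptions.

```python
# Hypothetical rule-based sketch of spatial IED categorization.
# The region map and the "generalized" rule are assumptions, not the
# dataset authors' annotation criteria.

# Assumed grouping of standard 10-20 electrodes (older nomenclature).
# F7/F8 are placed in the frontal group here; some conventions treat
# them as anterior temporal.
REGION_OF_ELECTRODE = {
    "Fp1": "frontal", "Fp2": "frontal", "F3": "frontal", "F4": "frontal",
    "F7": "frontal", "F8": "frontal", "Fz": "frontal",
    "T3": "temporal", "T4": "temporal", "T5": "temporal", "T6": "temporal",
    "O1": "occipital", "O2": "occipital",
    "C3": "centro-parietal", "C4": "centro-parietal", "Cz": "centro-parietal",
    "P3": "centro-parietal", "P4": "centro-parietal", "Pz": "centro-parietal",
}


def classify_ied(channels, generalized_min_regions=3):
    """Return 'generalized' if the discharge involves at least
    `generalized_min_regions` regions (hypothetical threshold);
    otherwise return the region with the most involved electrodes."""
    counts = {}
    for ch in channels:
        region = REGION_OF_ELECTRODE.get(ch)
        if region is None:
            raise ValueError(f"unknown electrode: {ch}")
        counts[region] = counts.get(region, 0) + 1
    if not counts:
        raise ValueError("no channels given")
    if len(counts) >= generalized_min_regions:
        return "generalized"
    # max() returns the first maximal key, so ties go to the region
    # encountered first in `channels`.
    return max(counts, key=counts.get)


print(classify_ied(["T3", "T5", "F7"]))
print(classify_ied(["Fp1", "T3", "O1", "C3"]))
```

A discharge on T3, T5, and F7 would be labeled temporal under this rule, while one spread over four regions would be labeled generalized. A real classifier would more likely learn these boundaries from the annotated epochs than hard-code them.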

All EEG data were

segmented into 4-second epochs. This resulted 2,516 IED epochs and 22,933 non-IED epochs

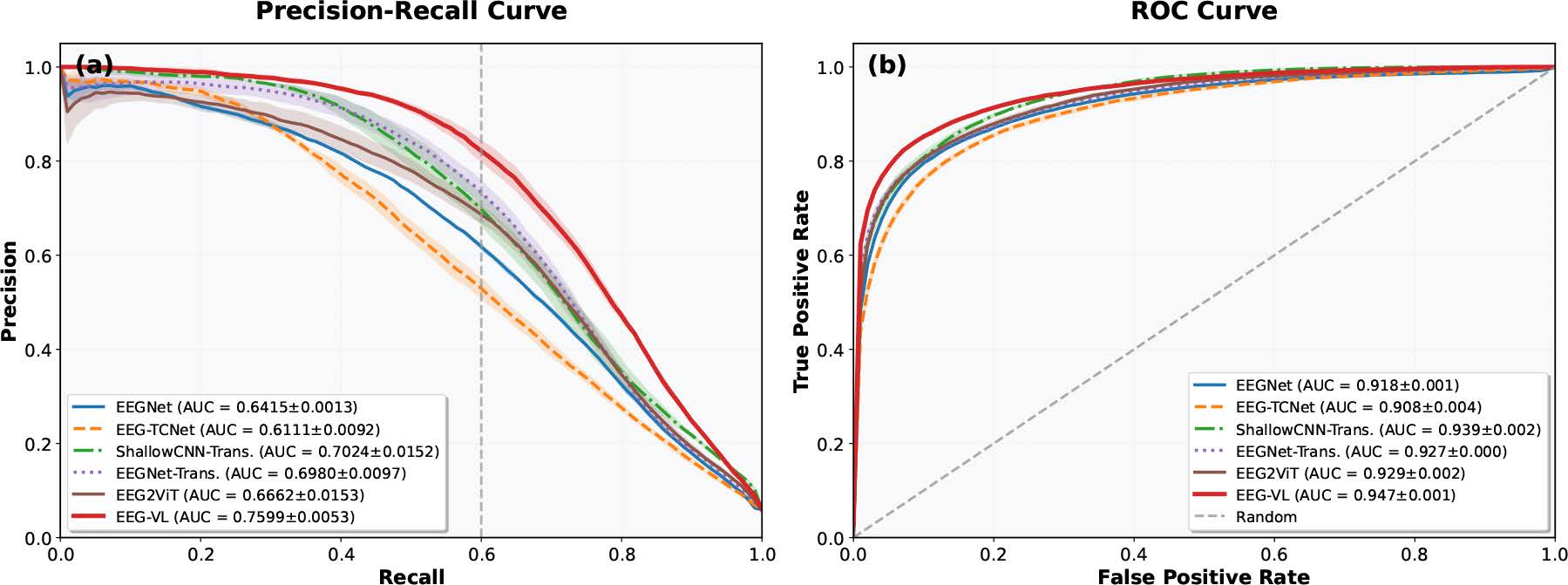

totally. We develop a VGG model

for IED detection trained and validated on the present dataset. The integration of consciousness

information improves

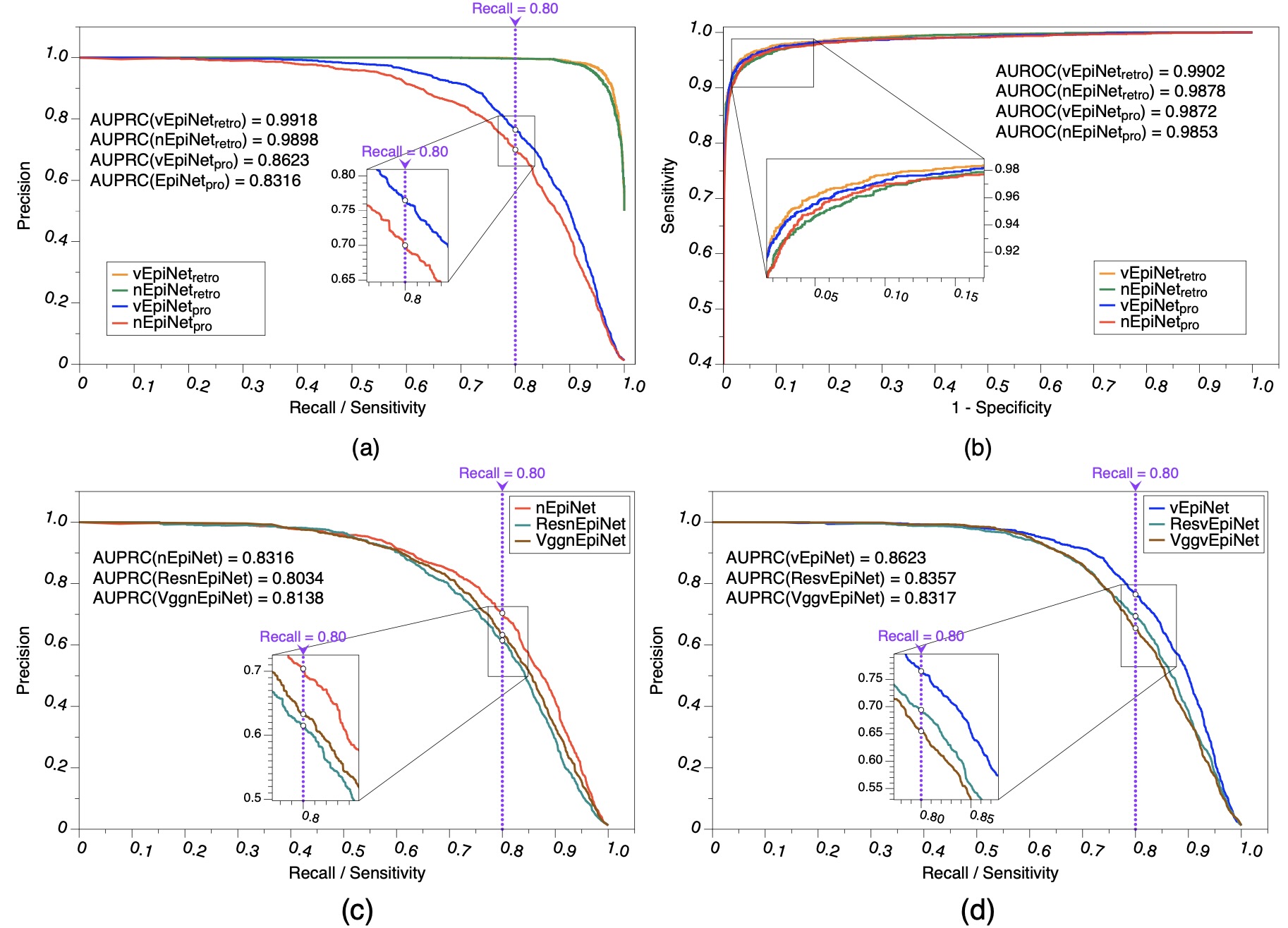

model performance, particularly at high levels of sensitivity. Furthermore, our dataset is

demonstrated to serve as a

robust tool for validating existing IED detection models and for automatically classifying IEDs

types based on spatial

distribution.
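The epoching step can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: it assumes a `(n_channels, n_samples)` array, non-overlapping windows, and a hypothetical rule that an epoch is labeled IED if any annotated discharge time falls inside it; `segment_epochs` and `ied_times_sec` are illustrative names. The durations are consistent (84 patients × 20 min = 1,680 min = 28 h), but strictly non-overlapping 4-s epochs would give 300 per recording, or 25,200 in total, slightly fewer than the 2,516 + 22,933 = 25,449 reported, so the published segmentation evidently differs in some way the abstract does not specify.

```python
import numpy as np


def segment_epochs(eeg, fs, epoch_sec=4.0, ied_times_sec=()):
    """Split a continuous recording into non-overlapping epochs.

    eeg: array of shape (n_channels, n_samples); fs: sampling rate in Hz.
    ied_times_sec: annotated discharge times in seconds (assumed format).
    Returns (epochs, labels): epochs has shape
    (n_epochs, n_channels, samples_per_epoch); labels is 1 for epochs
    containing at least one annotated IED, else 0. A trailing partial
    epoch is discarded.
    """
    samples_per_epoch = int(round(epoch_sec * fs))
    n_channels, n_samples = eeg.shape
    n_epochs = n_samples // samples_per_epoch
    trimmed = eeg[:, : n_epochs * samples_per_epoch]
    # (C, E*S) -> (C, E, S) -> (E, C, S)
    epochs = trimmed.reshape(n_channels, n_epochs, samples_per_epoch).transpose(1, 0, 2)

    labels = np.zeros(n_epochs, dtype=int)
    for t in ied_times_sec:
        idx = int(t // epoch_sec)  # epoch that contains time t
        if 0 <= idx < n_epochs:
            labels[idx] = 1
    return epochs, labels


# Usage: a 20-minute, 19-channel recording at an assumed 100 Hz.
eeg = np.zeros((19, 20 * 60 * 100))
epochs, labels = segment_epochs(eeg, fs=100, ied_times_sec=[10.0, 1199.5])
print(epochs.shape, labels.sum())
```

Labeling by "any discharge inside the window" is the simplest choice; a discharge straddling an epoch boundary would be assigned only to the epoch containing its annotated time point.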

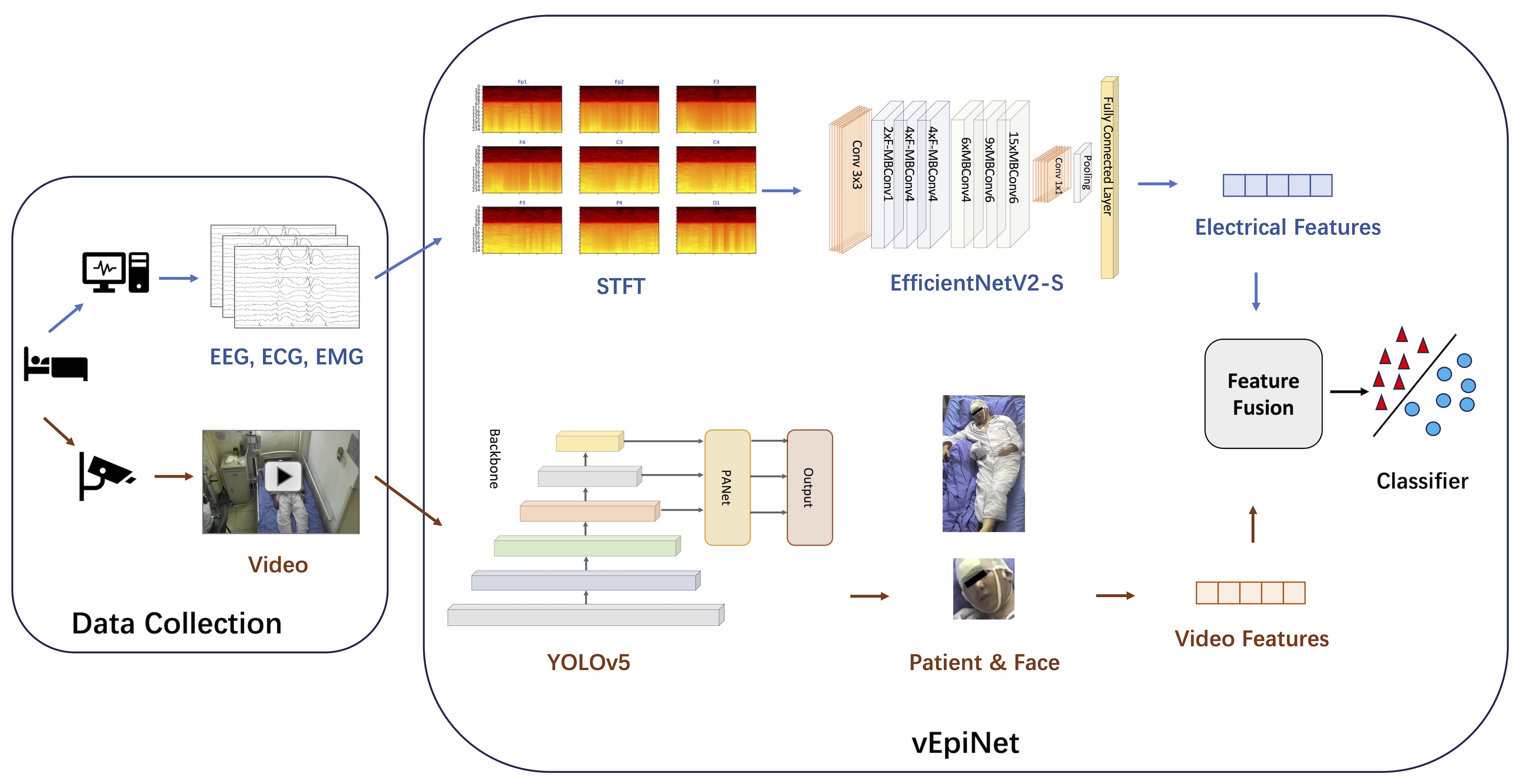

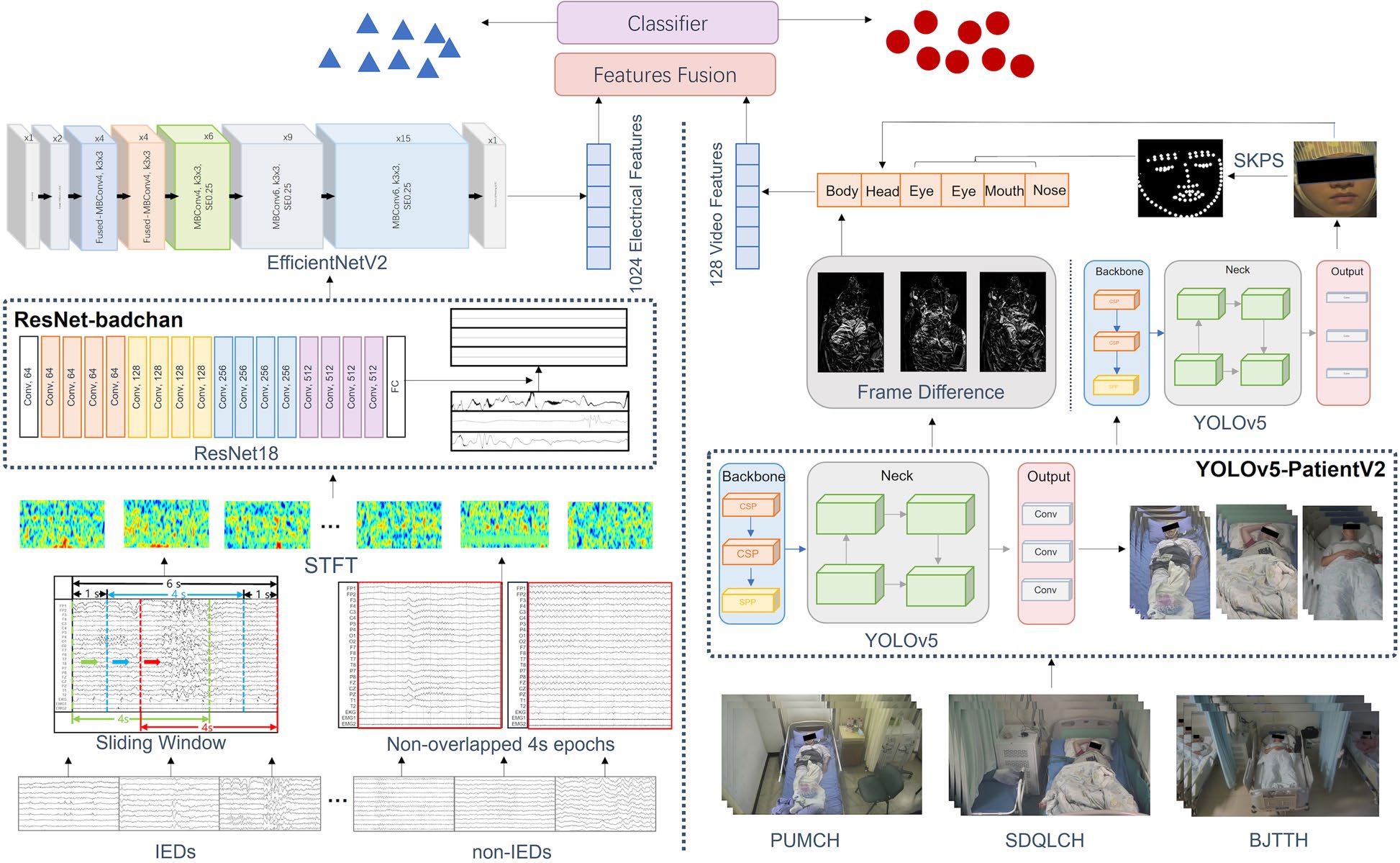

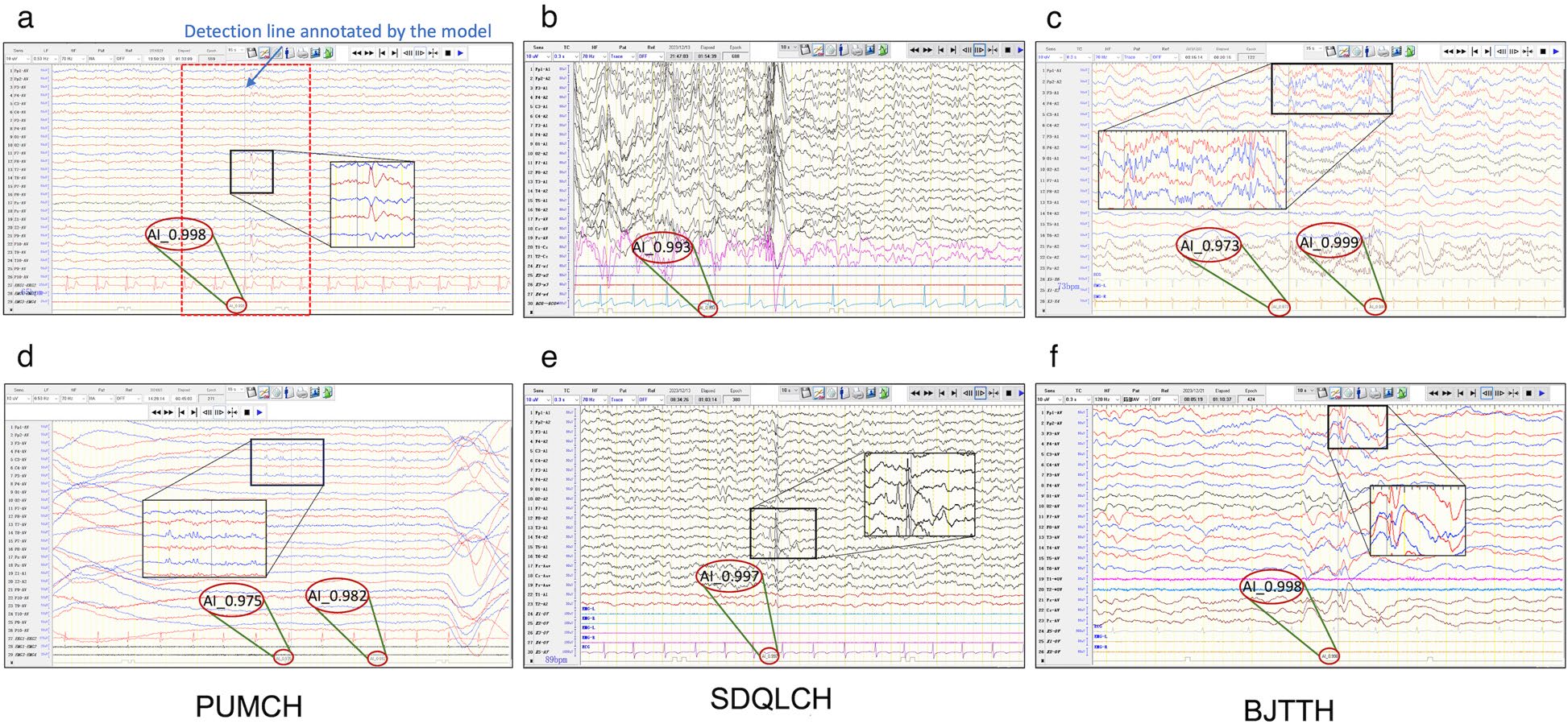

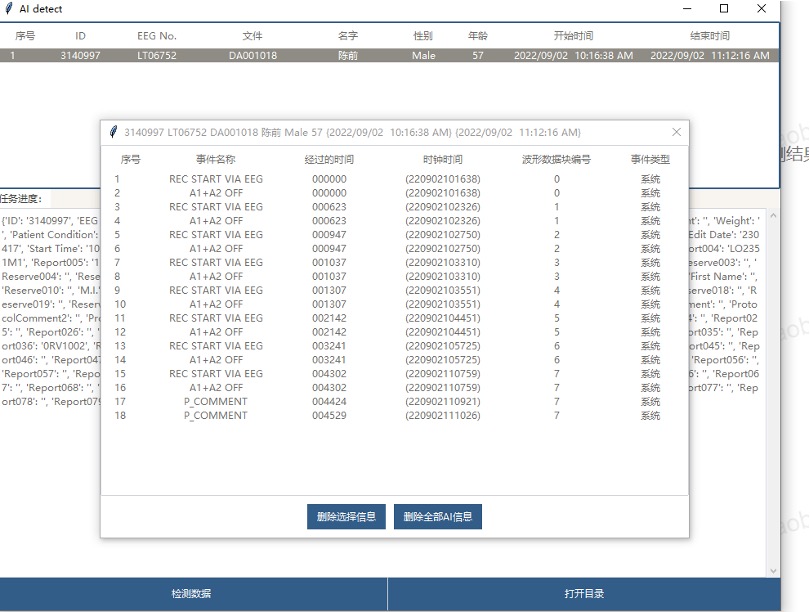

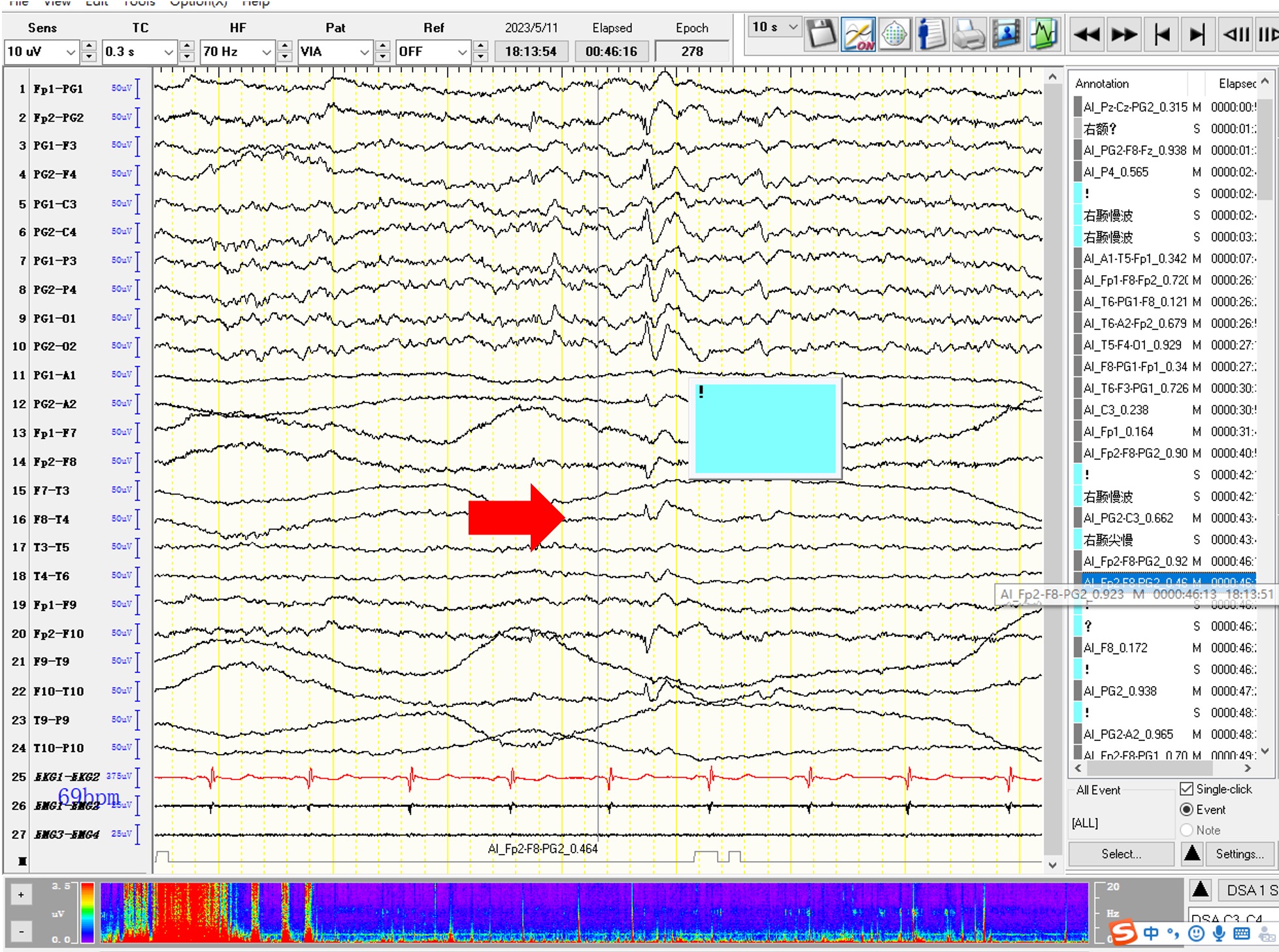

vEpiSpy is the first system to combine patient video with EEG data, emulating the way physicians annotate EEG recordings and thereby substantially improving the accuracy of AI-based EEG detection. In clinical trials, the system achieved a specificity of 90% and a sensitivity of 80%, with a false positive rate below 40%, matching the reading performance of human EEG experts. vEpiSpy also integrates directly with hospital EEG software, so physicians can view AI-annotated EEG results without any additional steps. With vEpiSpy's assistance, physicians' reading time was reduced by one-third on average, which corresponds to an efficiency gain of approximately 50% and saves considerable physician time.
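The figures above rest on standard confusion-matrix definitions, sketched below with illustrative function names (`binary_metrics`, `throughput_gain`) that are not part of vEpiSpy. Under the usual definition the false positive rate equals 1 − specificity, which would be 10% at 90% specificity, so the "below 40%" figure presumably uses a different definition, such as the share of flagged events that are false (the false discovery rate); the source does not say which. The second function shows why a one-third cut in reading time corresponds to roughly 50% more recordings read in the same time: 1 / (1 − 1/3) = 1.5.

```python
def binary_metrics(y_true, y_pred):
    """Standard metrics for binary labels (1 = IED, 0 = non-IED)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    return {
        "sensitivity": tp / (tp + fn),          # fraction of true IEDs detected
        "specificity": tn / (tn + fp),          # fraction of non-IEDs correctly rejected
        "false_positive_rate": fp / (tn + fp),  # equals 1 - specificity
        # Fraction of detections that are false (one possible alternative
        # reading of "false positive rate").
        "false_discovery_rate": fp / (tp + fp) if tp + fp else 0.0,
    }


def throughput_gain(time_reduction):
    """Relative increase in recordings read per unit time when the time
    per recording falls by `time_reduction` (e.g. 1/3 -> 0.5)."""
    return 1.0 / (1.0 - time_reduction) - 1.0


# Usage: 10 IED and 10 non-IED epochs; 8 IEDs detected, 1 false alarm.
y_true = [1] * 10 + [0] * 10
y_pred = [1] * 8 + [0] * 2 + [1] * 1 + [0] * 9
print(binary_metrics(y_true, y_pred))
print(round(throughput_gain(1 / 3), 3))
```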